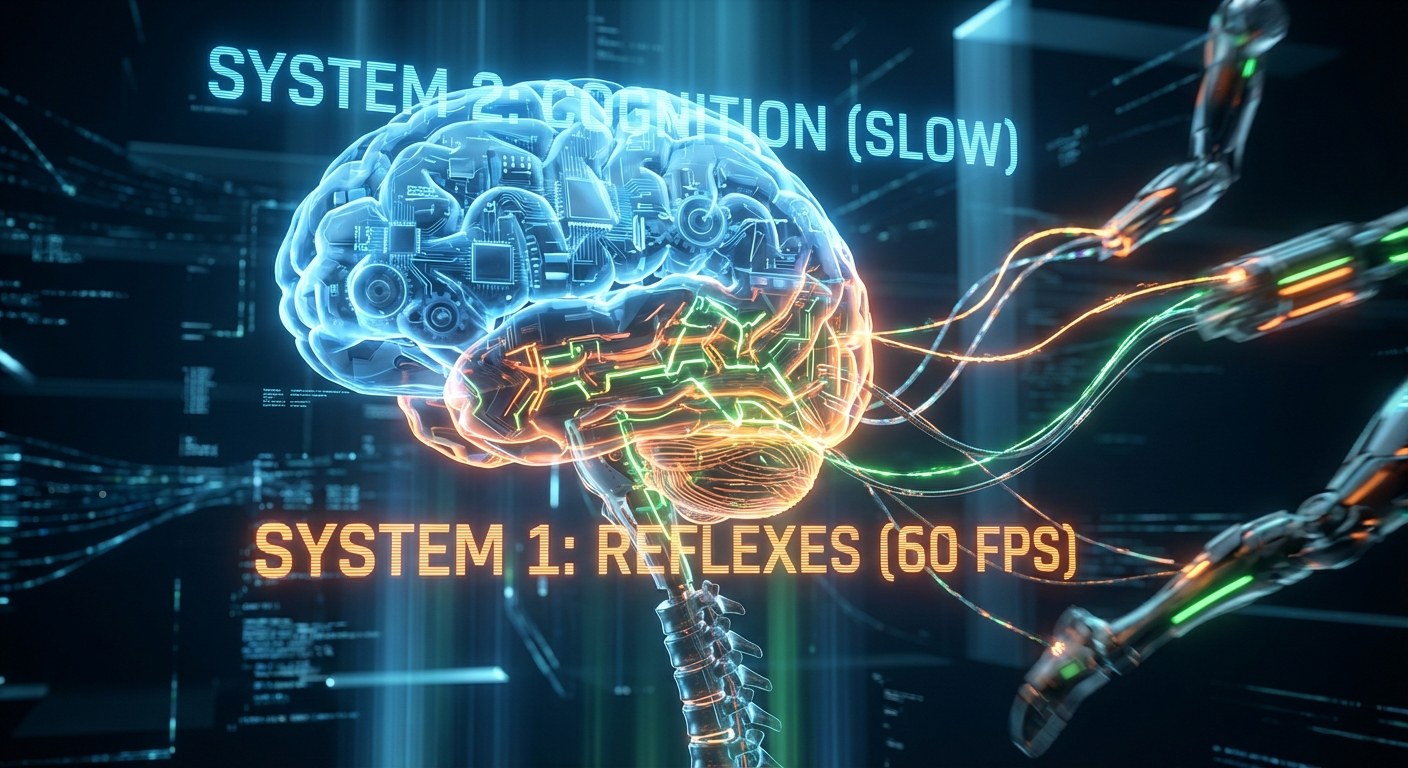

The Dual‑Process Architecture splits an AI system into two layers. System 2 is a heavyweight large language model, such as Gemma 3, Llama or GPT‑4, that produces strategic vectors over the course of several seconds. System 1 is a lightweight custom network that runs on every frame, 60 times per second. It cuts response time from the one to three seconds typical of a direct LLM call to under 16 ms, which fits inside the roughly 16.7 ms (1000 / 60) frame budget of a steady 60 FPS.
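A minimal sketch of the split follows. It assumes the two systems communicate through a shared "latest strategy" buffer; the source does not specify the mechanism. A background thread stands in for System 2 and publishes a new strategic vector whenever it finishes "thinking", while a 60 Hz loop stands in for System 1 and reads whatever vector is current. All names (`StrategyBuffer`, `slow_planner`, `fast_policy`, `run`) are hypothetical. The LLM call is replaced by `time.sleep`, and the think time is shortened to 50 ms so the demo finishes quickly.

```python
import threading
import time

FRAME_HZ = 60
FRAME_BUDGET_MS = 1000 / FRAME_HZ  # ≈ 16.7 ms available per frame at 60 FPS


class StrategyBuffer:
    """Latest strategic vector from System 2; System 1 reads it without waiting on the LLM."""

    def __init__(self, initial: list[float]) -> None:
        self._lock = threading.Lock()
        self._vector = list(initial)
        self._version = 0

    def publish(self, vector: list[float]) -> None:
        with self._lock:
            self._vector = list(vector)
            self._version += 1

    def latest(self) -> tuple[list[float], int]:
        with self._lock:
            return list(self._vector), self._version


def slow_planner(buffer: StrategyBuffer, stop: threading.Event, think_s: float) -> None:
    """Stand-in for System 2. A real system would query an LLM here (not shown)."""
    step = 0
    while not stop.is_set():
        time.sleep(think_s)  # simulates LLM latency (shortened for the demo)
        step += 1
        buffer.publish([0.1 * step, -0.1 * step])


def fast_policy(strategy: list[float], observation: list[float]) -> list[float]:
    """Stand-in for System 1: a cheap function of the strategy and the current input."""
    return [s + o for s, o in zip(strategy, observation)]


def run(frames: int, think_s: float = 0.05) -> tuple[list[float], set[int]]:
    """Tick System 1 at 60 Hz while System 2 updates the strategy in the background."""
    buffer = StrategyBuffer([0.0, 0.0])
    stop = threading.Event()
    planner = threading.Thread(target=slow_planner, args=(buffer, stop, think_s), daemon=True)
    planner.start()
    frame_ms: list[float] = []
    versions: set[int] = set()
    for _ in range(frames):
        start = time.perf_counter()
        strategy, version = buffer.latest()  # waits only for a brief lock, never for the model
        fast_policy(strategy, [0.0, 1.0])
        elapsed_ms = (time.perf_counter() - start) * 1000
        frame_ms.append(elapsed_ms)
        versions.add(version)
        time.sleep(max(0.0, (FRAME_BUDGET_MS - elapsed_ms) / 1000))  # hold roughly 60 FPS
    stop.set()
    planner.join()
    return frame_ms, versions


if __name__ == "__main__":
    times, seen = run(60)  # about one second of frames
    print(f"worst frame compute: {max(times):.3f} ms, strategy versions seen: {sorted(seen)}")
```

Because System 1 never waits for System 2, a slow LLM response only makes the strategy staler; it does not stall a frame.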

Near-instant feedback reshapes interactions with AI‑NPCs: blendshapes and joint movements update every frame without jitter, so users immediately perceive a "live" reaction. The resulting lift in Net Promoter Score and retention opens the door to monetizing each conversation, as paid content or through a subscription, without sacrificing quality.
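The source does not say how System 1 keeps blendshapes and joints jitter-free. One common technique, assumed here rather than taken from the architecture, is frame-rate-independent exponential smoothing: each frame, every weight moves a fraction α = 1 − e^(−Δt/τ) of the way toward its target. The time constant `tau`, the helper names, and the blendshape names are illustrative.

```python
import math


def smoothing_alpha(dt: float, tau: float) -> float:
    """Blend factor for one frame of length dt, given time constant tau (both in seconds)."""
    return 1.0 - math.exp(-dt / tau)


def smooth_weights(current: dict[str, float], target: dict[str, float],
                   dt: float, tau: float = 0.08) -> dict[str, float]:
    """Move each blendshape weight part of the way toward its target.

    Because 0 < alpha < 1, weights approach the target monotonically and never
    overshoot, which avoids frame-to-frame jitter. tau = 0.08 s is an assumed value.
    """
    alpha = smoothing_alpha(dt, tau)
    return {
        name: current.get(name, 0.0) + alpha * (goal - current.get(name, 0.0))
        for name, goal in target.items()
    }


if __name__ == "__main__":
    # Hypothetical target pose produced by System 1 for this frame.
    target = {"jawOpen": 1.0, "mouthSmileLeft": 0.5}
    weights: dict[str, float] = {}
    for _ in range(60):  # one second at 60 FPS
        weights = smooth_weights(weights, target, dt=1 / 60)
    print(weights)
```

Because `alpha` is derived from the frame time `dt`, the same `tau` yields the same motion at 30 FPS or 60 FPS.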

For game development studios, Dual‑Process promises up to a 30% reduction in inference costs and an additional $5–$10 in average revenue per user (ARPU) per session. In robotics, processing sensor data quickly without invoking the heavy model on every reading unlocks new verticals, from virtual assistants to industrial companions, potentially doubling the total addressable market by 2028.

Why this matters now: the first pilot integration already shows cost savings and an increase in ARPU. Start with a limited test in a single game or robot line, measure latency reduction and retention gains, then scale the solution across your entire portfolio.
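For the pilot, latency and retention can be summarized in a few lines. The helpers below are a hypothetical sketch, not part of the architecture: they report nearest-rank p50/p95 latency, the share of responses that fit inside the roughly 16.7 ms frame budget, and the absolute retention lift between a control group and a test group. The sample numbers are placeholders, not pilot results.

```python
import math

FRAME_BUDGET_MS = 1000 / 60  # ≈ 16.7 ms per frame at 60 FPS


def percentile(samples: list[float], p: float) -> float:
    """Nearest-rank percentile (0 < p <= 100) of a non-empty sample list."""
    ordered = sorted(samples)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def latency_report(samples_ms: list[float], budget_ms: float = FRAME_BUDGET_MS) -> dict[str, float]:
    """Summarize response latencies against the per-frame budget."""
    within = sum(1 for s in samples_ms if s <= budget_ms)
    return {
        "p50_ms": percentile(samples_ms, 50),
        "p95_ms": percentile(samples_ms, 95),
        "within_budget": within / len(samples_ms),
    }


def retention_lift(control_kept: int, control_total: int,
                   test_kept: int, test_total: int) -> float:
    """Absolute retention difference (test minus control) as a fraction."""
    return test_kept / test_total - control_kept / control_total


if __name__ == "__main__":
    # Placeholder measurements, for illustration only.
    baseline_ms = [1200, 1800, 2500, 3000]  # direct LLM round trips
    dual_ms = [9, 11, 12, 15, 20]           # System 1 responses
    print("baseline:", latency_report(baseline_ms))
    print("dual-process:", latency_report(dual_ms))
    print("retention lift:", retention_lift(400, 1000, 450, 1000))
```

Reporting p95 alongside the median matters here: a single slow frame is what users notice as a stutter, and the median hides it.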